I have just gotten back from attending the 19th International Conference on Computational Urban Planning and Urban Management (CUPUM) in London and thought I would share the two papers we presented at the conference.

The first paper was with Qingqing Chen and Linda See and was entitled "Using New Sources of Data for Urban Climate Modeling Generated through MLLMs on Street View Imagery." As the title might suggest, this paper was about how one can leverage multi-modal large language models (MLLMs) to extract information on building height, age and function from street level photographs. We demonstrate this by taking street view images from Mapillary, asking ChatGPT to estimate the building height, age and function, and comparing the results to authoritative data sources. If this sounds of interest, below you can see the abstract to the paper and some of the figures (i.e., the workflow and prompts), while the results can be seen in the attached paper (see the link below).

Abstract:

Urban climate and energy balance models require data on the form and function of buildings, but high resolution spatially explicit data sets are often lacking. Here we demonstrate how multi-modal large language models (MLLMs) can be used to extract information on building height, age and function from street level photographs for New York City. A workflow is presented that illustrates the approach, with initial results indicating that the building function can be identified with good accuracy while moderate accuracies were obtained for building heights and age. Suggestions for how to improve these accuracies are also provided.

KEYWORDS: Buildings, ChatGPT, Multi-modal Large Language Models (MLLMs), Mapillary, Street View Images (SVI).

|

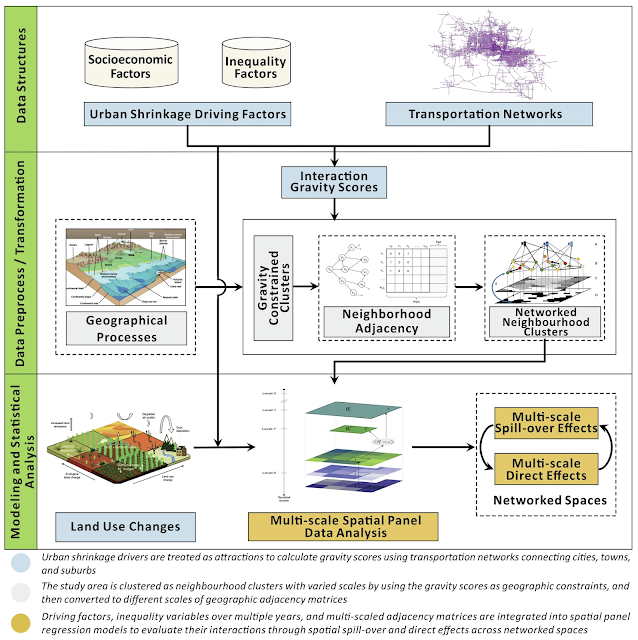

| An overview of research workflow. |

|

| The detailed description of multi-step prompting and an example of extracted building attributes information. |
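For readers who want a feel for the comparison step, here is a minimal Python sketch of how MLLM estimates could be scored against an authoritative data set. Everything in it is an assumption made for illustration: the `BuildingRecord` structure, the two metrics (share of matching function labels, and mean absolute error for height and construction year) and the toy values are all hypothetical, and are not taken from the paper, which may well use different measures.

```python
from dataclasses import dataclass


@dataclass
class BuildingRecord:
    """Hypothetical container for the three attributes discussed in the post."""
    function: str    # e.g. "residential", "commercial"
    height_m: float  # building height in meters
    year_built: int  # construction year, used as a proxy for age


def function_accuracy(predicted, reference):
    """Share of buildings whose predicted function label matches the reference."""
    matches = sum(p.function == r.function for p, r in zip(predicted, reference))
    return matches / len(reference)


def mean_absolute_error(predicted, reference, attribute):
    """Average absolute difference for a numeric attribute (height or year)."""
    errors = [
        abs(getattr(p, attribute) - getattr(r, attribute))
        for p, r in zip(predicted, reference)
    ]
    return sum(errors) / len(errors)


# Made-up values purely for illustration (not results from the paper).
mllm_estimates = [
    BuildingRecord("residential", 12.0, 1930),
    BuildingRecord("commercial", 45.0, 1975),
    BuildingRecord("residential", 9.0, 1950),
    BuildingRecord("mixed", 20.0, 2001),
]
authoritative = [
    BuildingRecord("residential", 10.0, 1925),
    BuildingRecord("commercial", 50.0, 1980),
    BuildingRecord("commercial", 9.0, 1940),
    BuildingRecord("mixed", 24.0, 2005),
]

print("Function accuracy:", function_accuracy(mllm_estimates, authoritative))
print("Height MAE (m):", mean_absolute_error(mllm_estimates, authoritative, "height_m"))
print("Year MAE (years):", mean_absolute_error(mllm_estimates, authoritative, "year_built"))
```

Treating function as a categorical match and height and age as numeric errors is just one plausible way to set this up; binning height or age into classes before comparing would be an equally reasonable alternative.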

Full Reference:

Chen, Q., See, L. and Crooks, A.T. (2025), Using New Sources of Data for Urban Climate Modeling Generated through MLLMs on Street View Imagery. In Cramer-Greenbaum, S., Dennett, A., and Zhong, C. (eds.), Proceedings of the 19th International Conference on Computational Urban Planning and Urban Management (CUPUM), London, UK. (pdf)

We then moved back to agent-based modeling with a paper entitled "Enhancing Spatial Reasoning and Behavior in Urban ABMs with Large-Language Models and Geospatial Foundation Models", which brought back together Nick Malleson, Alison Heppenstall, Ed Manley and myself. In this paper we discuss the potential role of LLMs and geospatial foundation models in the context of agent-based modeling. If this sounds of interest, below you can read the abstract to the paper and find a link to it at the bottom of the post. Nick has also shared the slides of this presentation here.

Abstract:

Modeling human behavior continues to be a significant challenge for the field of agent-based modeling, and one that prohibits the development of comprehensive empirical ABMs for urban applications, such as Urban Digital Twins. However, two recent methodological advances offer the potential to transform empirical agent-based models.

Early evidence suggests that large-language models (LLMs) can be used to represent a wide range of human behaviors, with models responding in realistic ways to given prompts. Indeed there is already a flurry of activity that focusses on implementing LLM-backed agents -- i.e. agents who are controlled by LLMs. At the same time, the concept of the foundation model is also being applied in domains beyond text analysis. Of particular interest are geospatial foundation models that automatically encode spatial data in such a way as to associate different spatial objects in numerous and nuanced ways that have otherwise eluded manual classification schemes. Taken together, these two technologies offer considerable potential for a new generation of agent-based models that contain agents who can behave in response to spatial and social prompts in a way that is realistic and has so far proven impossible to replicate using manually-programmed behavioral rules.

This paper presents a discussion of the state of the art in both LLMs and geospatial foundation models in the context of their potential role in agent-based modelling. It discusses the transformational potential of these technologies and outlines the critical questions that need to be addressed before they can be used to create robust, reliable and trustworthy models for empirical policy applications that support decision-making.

KEYWORDS: Agent-based Modeling; Large language model; Geospatial foundation model; Urban Modeling.

Full Reference:

Malleson, N., Crooks, A.T., Heppenstall, A. and Manley, E. (2025), Enhancing Spatial Reasoning and Behavior in Urban ABMs with Large-Language Models and Geospatial Foundation Models. In Cramer-Greenbaum, S., Dennett, A., and Zhong, C. (eds.), Proceedings of the 19th International Conference on Computational Urban Planning and Urban Management (CUPUM), London, UK. (pdf)